I use Capybara with Chrome for testing. From time to time Ubuntu asks me to update Chrome to the newest version. This breaks compatibility with the chromedriver version I use for testing.

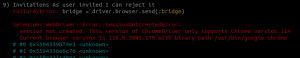

You experienced an error like this:

In this link you can find all the chromedriver versions, so you can pick the one that matches your current browser version.
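
To know which version to pick, it helps to compare what is installed first. Here is a minimal sketch, assuming the browser binary is called `google-chrome` and that `chromedriver` is already on your `PATH`. The helper names `major_version` and `versions_match` are made up for this example, and comparing only the major version is a simplification.

```shell
# Hypothetical helper: print the major version (the digits before the first dot)
# found in a string such as "Google Chrome 116.0.5845.96".
major_version() {
  printf '%s\n' "$1" | grep -oE '[0-9]+(\.[0-9]+)+' | head -n 1 | cut -d. -f1
}

# Hypothetical helper: succeed when both version strings share the same major version.
versions_match() {
  [ "$(major_version "$1")" = "$(major_version "$2")" ]
}

# Only run the comparison when both binaries exist (assumed names).
if command -v google-chrome >/dev/null 2>&1 && command -v chromedriver >/dev/null 2>&1; then
  if versions_match "$(google-chrome --version)" "$(chromedriver --version)"; then
    echo "Chrome and chromedriver major versions match"
  else
    echo "Version mismatch: chromedriver needs to be updated"
  fi
fi
```

If it reports a mismatch, the output of `google-chrome --version` tells you which chromedriver version to look for.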

I downloaded and installed the matching version to solve the problem. Here are the commands:

    wget https://edgedl.me.gvt1.com/edgedl/chrome/chrome-for-testing/116.0.5845.96/linux64/chromedriver-linux64.zip
    unzip chromedriver-linux64.zip
    sudo mv chromedriver-linux64/chromedriver /usr/local/bin/
    sudo chown root:root /usr/local/bin/chromedriver
    sudo chmod +x /usr/local/bin/chromedriver
    rm -r chromedriver*
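
Since this happens again with every Chrome update, the same steps can be wrapped so that only the version number changes. This is just a sketch: the function names `chromedriver_url` and `install_chromedriver` are made up, working in `/tmp` is my choice, and I am assuming that other versions are published under the same URL pattern as 116.0.5845.96, which I have not checked for every release.

```shell
# Hypothetical helper: build the download URL for a chromedriver version,
# assuming the same URL pattern as the 116.0.5845.96 download above.
chromedriver_url() {
  printf 'https://edgedl.me.gvt1.com/edgedl/chrome/chrome-for-testing/%s/linux64/chromedriver-linux64.zip\n' "$1"
}

# Hypothetical helper: download, install and clean up, stopping at the first failure.
# It is only defined here; nothing is downloaded until you call it.
install_chromedriver() {
  wget -O /tmp/chromedriver-linux64.zip "$(chromedriver_url "$1")" &&
    unzip -o /tmp/chromedriver-linux64.zip -d /tmp &&
    sudo mv /tmp/chromedriver-linux64/chromedriver /usr/local/bin/ &&
    sudo chown root:root /usr/local/bin/chromedriver &&
    sudo chmod +x /usr/local/bin/chromedriver &&
    rm -r /tmp/chromedriver-linux64 /tmp/chromedriver-linux64.zip
}
```

After sourcing these functions, `install_chromedriver 116.0.5845.96` would run the same steps as the commands above.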

Hope it works for you.

Happy coding!!